
When we first started helping clients launch their web applications on AWS in 2008, Amazon hadn't yet launched its Elastic Load Balancing (ELB) service, so we developed our own load balancing stack using open source software (Nginx + HAProxy).

It was exciting to see Amazon launch a ready-to-use service in 2009. We generally advised our clients to use ELB where it met their needs, but in some cases it didn't offer the features required. At initial launch, ELB had some limitations (as would be expected with a brand new offering) with respect to load balancing algorithm options, sticky sessions, URL parsing, virtual hosts, SSL/TLS connections, and multiple backend pools. Amazon has since solved many of those issues, but a good number of use cases still require a more advanced load balancing solution (which we provide to some of our clients). However, ELB remains a great solution for many use cases, as it integrates well with Auto Scaling groups and saves our clients a great deal of work at a fraction of the price of traditional load balancing solutions.

With all that said, we're super excited to see Amazon launch a new generation of load balancer, the Application Load Balancer (ALB). With this launch Amazon finally adds support for URL parsing and multiple backend pools. Many of our clients are particularly excited about WebSocket and HTTP/2 support. We're still evaluating ALB for use in container-centric environments, where we currently recommend open source solutions (e.g., HAProxy and Traefik), but Amazon has designed ALB to support these workloads, and we expect that it will fit many of our clients’ needs.

Anybody running web applications on AWS would benefit from exploring Amazon’s ALB as a potential load balancing solution. You can learn more from the official announcement, or feel free to reach out, as we’d love to help guide you along the way.

Load balancing is just one of the tools available on AWS that facilitates scaling applications to the largest of sizes. Brandorr Group is an Advanced AWS Consulting Partner with decades of experience architecting and scaling applications. Please contact us today about your scaling needs.
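To make "URL parsing and multiple backend pools" concrete, here is a minimal conceptual sketch of path-based routing. It is not the ALB API: the `Rule` class, the `choose_pool` function, and the pool names are all hypothetical, and plain prefix matching is a simplification of how a real load balancer evaluates its rules.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Rule:
    """One routing rule: paths starting with `prefix` go to `pool`."""
    prefix: str
    pool: str


def choose_pool(path, rules, default_pool):
    """Return the pool of the first rule whose prefix matches the path.

    Rules are checked in order; if none match, the default pool is used.
    """
    for rule in rules:
        if path.startswith(rule.prefix):
            return rule.pool
    return default_pool


# Hypothetical rules and pool names, for illustration only.
RULES = [
    Rule(prefix="/api/", pool="api-servers"),
    Rule(prefix="/static/", pool="static-servers"),
]

if __name__ == "__main__":
    for path in ("/api/users", "/static/logo.png", "/about"):
        print(path, "->", choose_pool(path, RULES, default_pool="web-servers"))
```

With a single-pool load balancer, every request lands on the same set of instances; with rules like these, one load balancer can front several independently scaled pools.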


Foreman 1.10 is ready! The greatest news is that Smart-proxy DNS is now pluggable! It is a very useful improvement, because it enables third party DNS services like Amazon Route53 and PowerDNS. We're testing it right now and are really excited about it, as we use Route53 as well as DNSMadeEasy, which we hope to add support for in the future. This version brings lots of other new highlights, the most noticeable of which are:

One of the questions we regularly get is: which Linux AMI should I use on Amazon EC2? To answer it, there are a number of questions you'll need to ask yourself to narrow it down.

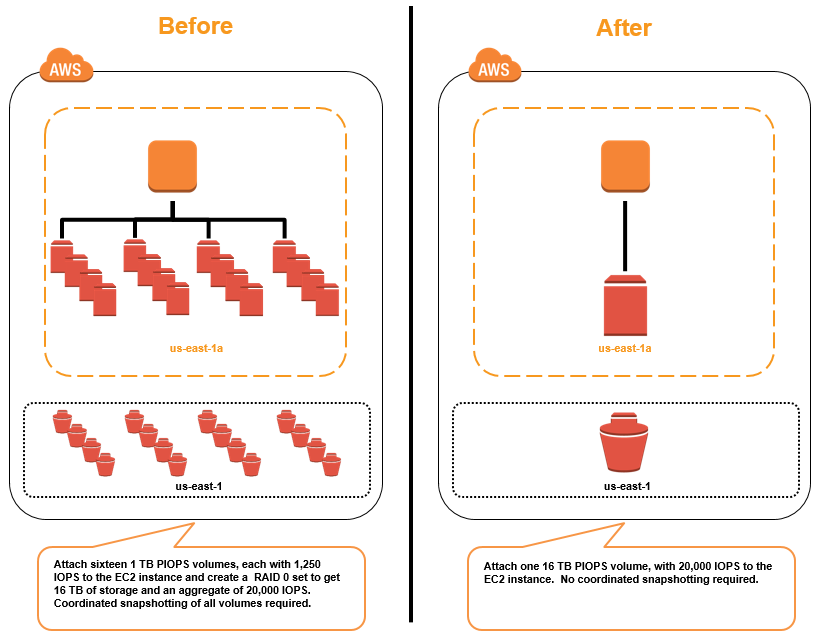

1) Which Linux distro do you want to run?

You likely want to run one of the two most popular distros on EC2:

a) Ubuntu Server (LTS release) - This is clearly EC2 users' favorite OS, and is running on more instances than all others combined. Support channels, including self-service forums, are the most robust for Ubuntu. Paid support is available.

b) CentOS - If you are looking for a Red Hat based distro that is well supported on Amazon, and you are NOT a RHEL subscriber, this will be your distro of choice. Red Hat now officially funds development of CentOS, so it can be a very valid choice, particularly if you are attracted to the 10-year support lifecycle.

Other popular distros should probably be used only if you are an existing user of them, as they aren't nearly as popular as Ubuntu and CentOS, so you might run into issues that others haven't. These distros include:

c) Red Hat Enterprise Linux

d) Debian

e) SUSE Linux Enterprise Server

Then there is Amazon Linux. While Amazon Linux is a fine, stable distro that comes preconfigured with many AWS tools, we have difficulty recommending it, because it can't run outside of Amazon's cloud. You may think you do not care, as you plan to run all your infrastructure on EC2, but many modern development workflows require running local VMs that mirror your production environment on developer laptops (think Vagrant). Also, if you have a mixture of on-premises and cloud servers, again you can't run the same OS everywhere.

Of course, if you are running a container-based workflow, the latest Amazon Linux has full support for Docker, which powers the Amazon EC2 Container Service. If you want to use Docker, and use the Amazon EC2 Container Service to get going, it's a perfectly valid choice to use the Amazon ECS-optimized AMI, which is basically Amazon Linux preconfigured with Docker and the EC2 container agent. That said, most distros support Docker today, and we tend to steer people with heavy container-based workflows towards building their own clusters using distros like CoreOS that were built from the ground up to only host containers.

2) 32-bit or 64-bit?

Although there are a few edge cases where a 32-bit AMI makes sense, the answer is now almost invariably 64-bit. Every instance type can run a 64-bit AMI, so you have the most flexibility to resize your instance at a later date.

3) PV or HVM?

If you are new to AWS, please choose an HVM AMI, as all new instance types will support HVM, and PV is being phased out. If you aren't new to AWS, you'll have to make the decision on a case by case basis, but in most cases you'll want to choose an HVM AMI so that you can take advantage of the newer instance types that provide higher performance and better cost effectiveness.

4) EBS or instance-store?

In almost all cases you want EBS-backed AMIs, as they give you much more flexibility: they allow you to turn off the instance without losing the data stored on the root volume. This also gives you the ability to easily resize your instances.

In summary, in all likelihood you'll want to be running a 64-bit, EBS-backed, HVM AMI that's running a popular Linux distribution like Ubuntu LTS or CentOS.

During last year’s AWS re:Invent conference, AWS announced their plan to support larger EBS volumes. At the time, the maximum EBS volume size was 1TB. If a user needed to create volumes and file systems larger than 1TB, they had to rely on drive aggregation techniques like software RAID or drive concatenation. This increased complexity, cost, and risk. Today, AWS announced the availability of the promised larger and faster EBS volumes. Now you can create:

--

Brandorr Group is an Advanced Amazon Web Services Consulting Partner with decades of experience architecting, automating, and managing cloud infrastructure using AWS best practices, which include database backups and disaster recovery. We help our clients successfully execute their cloud strategy by providing architecture and implementation services with 24x7x365 on-call emergency response. We’d love to help with your automation/scaling needs; contact us today.

Amazon finally released EBS volumes that are larger than 1TB, a feature many have been waiting on for years. Read the announcement for more details, but the quick summary is that you can now provision SSD (gp2 and io1) EBS volumes up to 16TB in size, with up to 20,000 Provisioned IOPS.
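As a rough illustration of those numbers, the sketch below checks a requested volume against the limits quoted in this post. The function and constant names are hypothetical, the 16TB figure is assumed to mean 16 × 1024 GiB, and the values are a snapshot from the announcement rather than current AWS limits.

```python
# Limits as quoted in the post; a snapshot, not necessarily current AWS limits.
MAX_VOLUME_SIZE_GIB = 16 * 1024  # "up to 16TB", assumed to mean 16 TiB
MAX_PROVISIONED_IOPS = 20_000    # "up to 20,000 Provisioned IOPS"
SSD_VOLUME_TYPES = {"gp2", "io1"}


def check_volume_request(volume_type, size_gib, provisioned_iops=None):
    """Return a list of problems with a requested volume (empty if none)."""
    problems = []
    if volume_type not in SSD_VOLUME_TYPES:
        problems.append(f"unsupported volume type: {volume_type}")
    if not 1 <= size_gib <= MAX_VOLUME_SIZE_GIB:
        problems.append(
            f"size {size_gib} GiB is outside 1..{MAX_VOLUME_SIZE_GIB} GiB"
        )
    if provisioned_iops is not None and provisioned_iops > MAX_PROVISIONED_IOPS:
        problems.append(
            f"{provisioned_iops} IOPS exceeds the {MAX_PROVISIONED_IOPS} maximum"
        )
    return problems


if __name__ == "__main__":
    # A 4 TiB volume no longer needs software RAID across 1TB volumes.
    print(check_volume_request("gp2", 4096))
    print(check_volume_request("io1", 20000, provisioned_iops=25000))
```

A single volume that passes this check replaces the RAID or concatenation setups that were previously needed for anything over 1TB.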


Author

Brandorr Group LLC is a one-stop cloud computing solution provider, helping companies manage growth and ship new projects using cloud and scalability best practices.



Location

3117 Broadway, Suite 50, New York, NY 10027

Contact Us

We'd love to hear from you; please feel free to call us: 866.991.4838

About Brandorr

With decades of experience in cloud technologies and specialties in high volume/throughput, high availability, and disaster mitigation engineering, Brandorr Group has the experience to help customers of all sizes develop, deploy and manage their new or existing infrastructure in the cloud.

By using provisioning and configuration management technologies such as Docker, Ansible, Chef, Puppet, Terraform, and CloudFormation, we are able to quickly and cost-effectively scale and deploy infrastructure projects of any size. Additionally, Brandorr maintains 24x7 systems engineering, security and monitoring teams augmented by database administrators and software developers to ensure projects are delivered and systems remain highly available while maintaining performance.